Interactive AI Tools Need Humans to Design Them

The growing world of AI has brought with it a wave of AI tools specifically designed to substitute for human involvement. For example, smart assistants are deployed in a range of areas to perform simple tasks, answer queries, and aid us in our everyday interactions with our devices. This form of Human-Computer Interaction (HCI) offers immense benefits in our professional and personal lives, from removing monotonous query responses in customer assistance, to improving accessibility by letting people complete manual tasks simply by talking to an AI tool.

At the same time, AI applications are gaining a growing foothold within the world of design – from generating impressive artworks, to designing buildings efficiently and ensuring structural integrity. There is the potential that AI design tools could one day be tasked with designing the aesthetics for interactive tools, such as dictating the design choices for the appearance of smart assistants. While this seems like a natural area for AI to expand into, there is good reason to believe this could never work effectively, and the role of dictating design choices for interactive tools will, ultimately, remain a human one. So, why is this?

Real and fictional AI follows aesthetic conventions:

Whether we are aware of it or not, there are certain conventions within software, AI and robotics tools that heavily influence the ways in which we perceive (and in turn, react to) them. We often perceive ‘good’ AI tools as speaking softly and having an outward appearance of being soft, docile, and friendly. The opposite can often give us the perception that an AI tool is bad or not to be trusted. Think of a sci-fi show where the ‘kind, friendly’ AI shifts from a soft to a deep voice, as its LED suddenly shifts from blue or green to an ominous red. This isn’t just within fiction either – smart assistants like Siri, Alexa, Cortana, and Bixby are portrayed on our devices as sentient blue or white circles, with soft, feminine voices to communicate with us. In both fiction and reality, our perception of the difference between a ‘good’ and an ‘evil’ AI has more to do with the way it looks or sounds than with what it does. These visual and audible cues have a huge impact on the way we interact with these tools – imagine how uncomfortable you would feel if your smart assistant suddenly switched to a jagged red shape and started shouting.

Why does this happen?

Humans have designed interactive AI tools to replicate our human interactions. The implication of this is that the lines between talking to a human and talking to an AI tool have blurred substantially. Humans don’t necessarily acknowledge that they are talking to lines of code when speaking to their smart assistants, but instead personify them – we see them as conscious, thinking entities because the way they operate resonates with the ways in which we perceive other humans. Because of this, we can – unknowingly – apply our conventions of perceiving emotion and intention within our interactions to these non-human entities.

Whether consciously or subconsciously, humans understand certain emotive conventions in our interactions. We can point to what they are (i.e. which colours or tones of voice make us feel uneasy, and which ones we find engaging, calming, etc.), but this is not to say that we can offer an explanation as to why they are (as in, why blue or green is a more engaging colour for these tools than red). These aesthetic conventions are well established - most people would describe blue as calming, and red as aggressive - and yet, we cannot easily explain why we perceive these human characteristics within non-human entities. With the blurred lines between Human and Human-Computer interaction, we begin to apply these same aesthetic conventions to tools that ultimately do not ‘feel’ the characteristics we perceive them to possess. As we project our human experiences onto these interactive tools, their aesthetic design has a strong influence on the ‘character’ that we create when perceiving them. If they possess aesthetic characteristics that we perceive negatively, we can unintentionally personify the tool with those negative traits, and therefore be uncomfortable with it.

Human-like AI can creep us out:

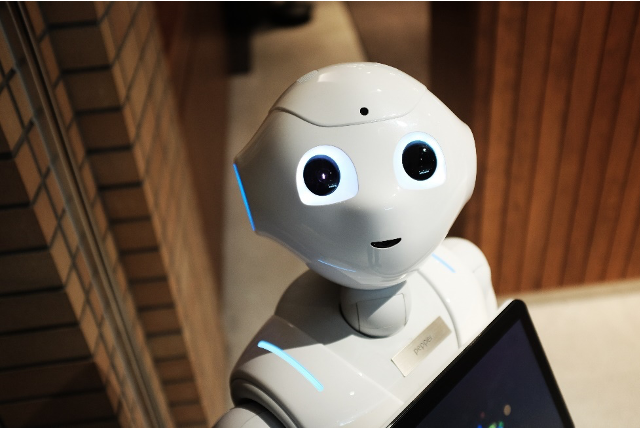

Similar problems arise within the world of AI and its applications within robotics tools, with the uncanny valley heavily dictating the design choices of human-like interactive robots. In short, the uncanny valley is the idea that, as non-human things approximate human characteristics, there is a point at which our perception suddenly shifts from empathy to repulsion. This is because, as they approximate familiar human characteristics, they hit a point of being uncanny – they appear somewhat familiar, but still possess an element of unfamiliarity, an ‘unknown’. As such, they can arouse a sense of discomfort, fear, or anxiety within us. This is important to consider, as this perception heavily influences how far we are willing to interact with these tools. The point at which a human-like figure becomes uncanny is not well defined, so we cannot say with any certainty when something will become uncanny as it approximates a human look. Rather, we can only point to examples of things that appear uncanny to us.

A particular example of this is Sophia, the AI-powered robot designed by Hanson Robotics. Sophia has been showcased at many events since its unveiling in 2016 and has been met with mixed reactions – from fascination to a feeling of discomfort. Sophia has a humanoid appearance and the ability to portray a range of emotions, yet retains a level of artificiality – with technical components clearly exposed for those interacting with Sophia to see. At the same time, the choice of Sophia’s soft and ‘feminine’ appearance has led to the robot receiving far more attention and popularity than its ‘brother’, Han, a similar AI-powered robot designed with a ‘spooky’, realistic male appearance in mind.

Final word - What are the implications of this?

Ultimately, the ways in which we can interact with AI tools are dictated by rules which we cannot easily define. What this means is that we cannot explain why particular aspects of design cause us to feel either positively or negatively, but can merely point to examples of which design aspects we like and which we dislike. As such, when it comes to the design of these tools, humans will always be needed to perceive, judge, and react to them, highlighting the aspects that bring us comfort and the ones that make us uncomfortable. It is only with this human aspect of the design process that we can ensure that the interactive tools created are engaging and not off-putting, so that they may fulfil their roles effectively without creeping us out and putting us off interacting with them.