Speed Is Not a Strategy: Why Accuracy Must Come First in Enterprise AI

There is a conversation happening in boardrooms across the UK and Europe, and it usually goes something like this: "We want AI that gives us answers fast." Speed is intuitive. Speed is measurable. Speed feels like the right proxy for a system that is working.

It is also, in almost every case, the wrong place to start.

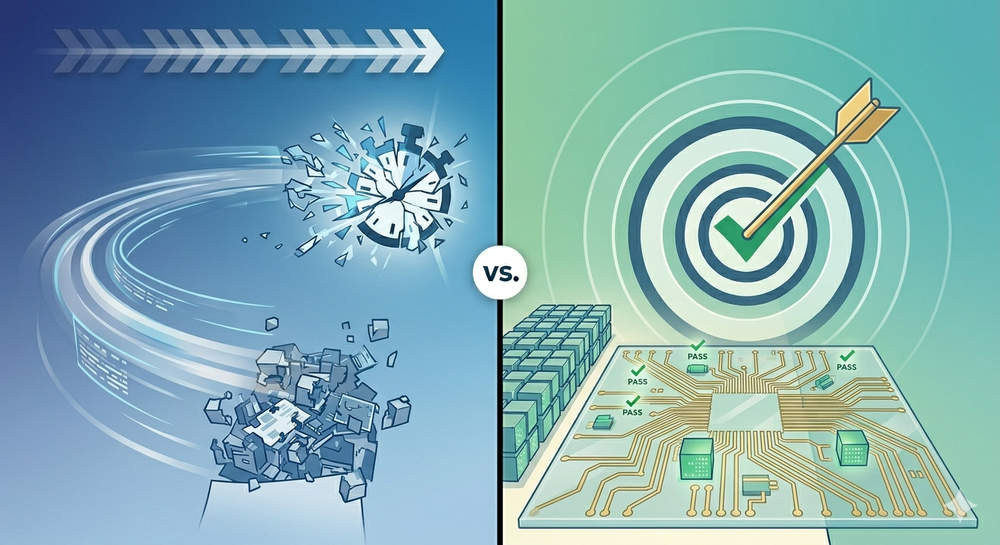

The most consequential decision you will make when deploying AI in your organisation is not about how quickly it responds. It is about whether it responds correctly. And the two are not the same problem, do not require the same solution, and should not be optimised at the same time.

This article is about that tradeoff: what it actually means, why the order of operations matters enormously, and why the pressure to lead with latency is one of the most reliable paths to an AI project that quietly fails.

The Tradeoff, Properly Defined

Latency and accuracy are two axes on which any AI system can be evaluated. Latency measures how quickly the system returns a response. Accuracy measures whether that response is correct, useful, and appropriate for the domain it is serving.

The mistake most organisations make is treating these as equivalent engineering concerns to be balanced simultaneously. In practice, they are sequential problems. You cannot meaningfully optimise the speed of a wrong answer.

Consider a concrete example. A utilities company deploys an AI assistant to help field engineers interpret sensor data and flag anomalies. If the system responds in 400 milliseconds but misclassifies a pressure variance that precedes equipment failure, its speed is worse than irrelevant: a fast wrong answer is potentially dangerous. If it responds in four seconds and correctly identifies the anomaly, the engineer has something to act on.

This is not an argument against fast AI. Fast, accurate AI is the goal. The argument is about sequencing: accuracy is a prerequisite for latency optimisation to have any value.

Why Accuracy Is Harder Than It Looks

Senior decision-makers often underestimate how difficult domain-specific accuracy is to achieve, because modern AI models look impressive out of the box. Ask a frontier model a general question and the response is fluent, confident, and often correct.

Enterprise use cases are different. The gap between a model that sounds right and a model that is right for your domain is significant, and crossing it requires deliberate engineering.

There are several established approaches to getting accuracy right, each suited to different types of problems.

Retrieval-Augmented Generation (RAG) connects a language model to your organisation's proprietary data (documents, databases, operational records, historical outputs). At query time, the system retrieves the passages most relevant to the question and passes them to the model, so that responses are grounded in your actual information rather than the model's general training. For organisations with large bodies of internal knowledge, this is often the most important accuracy lever available.
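For technical readers, the retrieval step can be sketched in a few lines. This is a minimal illustration, not a production design: it assumes a hypothetical in-memory corpus and ranks documents by simple word overlap, where a real system would typically use embeddings and a vector index. The names `retrieve` and `build_grounded_prompt` are invented for this example, and the call to the language model itself is deliberately left out.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lower-cased set of words; a crude stand-in for an embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Document], k: int = 2) -> list[Document]:
    """Return up to k documents that share the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(d.text)), d) for d in corpus]
    scored = [pair for pair in scored if pair[0] > 0]  # drop unrelated documents
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


def build_grounded_prompt(query: str, corpus: list[Document], k: int = 2) -> str:
    """Build a prompt that tells the model to answer only from retrieved text."""
    sources = "\n".join(f"[{d.doc_id}] {d.text}" for d in retrieve(query, corpus, k))
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


# Hypothetical two-document corpus, for illustration only.
corpus = [
    Document("SOP-12", "Sustained pressure variance on pump P3 is an early sign of seal wear."),
    Document("HR-04", "Annual leave requests go through the staff portal."),
]
question = "What does pressure variance on pump P3 indicate?"
prompt = build_grounded_prompt(question, corpus)
print(prompt)
```

The instruction to answer only from the supplied sources is what "grounding" means in practice: the model is steered toward your documents and away from whatever its general training happens to suggest.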

Fine-tuning goes further: it adapts the model's underlying behaviour using labelled examples from your domain. Where RAG gives the model access to your information, fine-tuning changes how the model reasons about it. For highly specialised domains (legal, engineering, scientific, clinical), this can be the difference between a system that is useful and one that is not.

Structured evaluation pipelines are how you measure accuracy before you deploy. This means defining what a correct response looks like in your domain, building test sets that reflect real-world edge cases, and running systematic assessments rather than ad hoc spot checks. Without this, you cannot know whether your accuracy is good enough to justify deployment, let alone optimisation.
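A structured evaluation can start very small. The sketch below assumes a hypothetical expert-labelled test set and a deliberately naive classifier (`naive_system`) standing in for the AI system; `EvalCase`, `evaluate` and `ready_to_deploy` are illustrative names, not an existing library. The point it makes is that scoring by slice, not just on average, exposes edge-case failures that a single headline number hides.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    inputs: str
    expected: str  # the answer a domain expert signed off on
    tag: str       # slice label, e.g. "routine" or "edge_case"


def evaluate(system: Callable[[str], str], cases: list[EvalCase]) -> dict[str, float]:
    """Accuracy overall and per tag, so weak slices are not averaged away."""
    hits: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for case in cases:
        correct = system(case.inputs).strip().lower() == case.expected.lower()
        for key in ("overall", case.tag):
            totals[key] += 1
            hits[key] += int(correct)
    return {key: hits[key] / totals[key] for key in totals}


def ready_to_deploy(scores: dict[str, float], threshold: float) -> bool:
    """Every slice, not only the average, must clear the agreed threshold."""
    return all(score >= threshold for score in scores.values())


def naive_system(reading: str) -> str:
    """Hypothetical system under test: flags only obvious spikes."""
    return "anomaly" if "spike" in reading else "normal"


cases = [
    EvalCase("pressure spike on P3", "anomaly", "routine"),
    EvalCase("steady pressure on P3", "normal", "routine"),
    EvalCase("slow pressure drift on P3", "anomaly", "edge_case"),
]
scores = evaluate(naive_system, cases)
print(scores)
print("ready:", ready_to_deploy(scores, threshold=0.9))
```

Here the routine slice scores perfectly, while the one edge case (a slow drift rather than a spike) is missed, so the gate refuses deployment even though two of the three answers are right.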

Human-in-the-loop feedback closes the loop after deployment. No model is perfect at launch; what matters is whether the gap between what the system produces and what your domain experts would have produced instead is captured and fed back into the next round of improvement. The feedback mechanism is as important as the model itself.
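One way to make the feedback loop concrete is to log each expert review and turn every correction into a new evaluation case. This is a hedged sketch: `FeedbackLog` and its methods are hypothetical, and a real deployment would persist reviews to a database and route them through whatever review tooling the organisation already uses.

```python
from dataclasses import dataclass, field


@dataclass
class Review:
    inputs: str
    model_output: str
    expert_output: str


@dataclass
class FeedbackLog:
    """Hypothetical in-memory store for expert reviews of production outputs."""
    reviews: list[Review] = field(default_factory=list)

    def record(self, inputs: str, model_output: str, expert_output: str) -> None:
        self.reviews.append(Review(inputs, model_output, expert_output))

    def disagreement_rate(self) -> float:
        """Share of reviewed outputs that the expert changed."""
        if not self.reviews:
            return 0.0
        changed = sum(r.model_output != r.expert_output for r in self.reviews)
        return changed / len(self.reviews)

    def new_eval_cases(self) -> list[tuple[str, str]]:
        """Corrections become (input, expected) pairs for the next evaluation run."""
        return [(r.inputs, r.expert_output) for r in self.reviews
                if r.model_output != r.expert_output]


log = FeedbackLog()
log.record("pressure spike on P3", model_output="anomaly", expert_output="anomaly")
log.record("slow pressure drift on P3", model_output="normal", expert_output="anomaly")
rate = log.disagreement_rate()
print(f"experts changed {rate:.0%} of reviewed outputs")
print(log.new_eval_cases())
```

The design choice worth noting is that corrections are not just counted; they flow back into the test set, so the next evaluation measures the system against exactly the cases it previously got wrong.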

These approaches are not mutually exclusive. Production-grade AI systems typically combine all of them. The common thread is that each one requires time, domain expertise, and a clear definition of what correct actually means in context.

The Cost of Getting the Order Wrong

When organisations optimise for latency before accuracy is established, two things tend to happen.

The first is that inaccurate outputs become normalised. Users experience errors, develop workarounds, and stop trusting the system. In enterprise contexts, trust is extraordinarily difficult to rebuild once lost. A fast AI that is wrong often enough will be abandoned, not dramatically but gradually, as people quietly stop using it.

The second is that latency optimisation applied to an inaccurate system can make the underlying problem harder to fix. Techniques like model quantisation, response caching, and substituting a smaller model all involve tradeoffs that can further constrain accuracy: quantisation and smaller models can reduce output quality, and a cache will keep serving a wrong answer until it is invalidated. Optimising first means locking in architectural decisions before the accuracy baseline is properly established.
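Response caching is the easiest of these tradeoffs to see in code. The sketch uses Python's standard `functools.lru_cache` around a hypothetical `answer` function that stands in for an expensive model call; the `KNOWLEDGE` dictionary is an assumed, made-up data source. It shows that once a wrong answer is cached, correcting the underlying data does not change what users see until the cache is cleared.

```python
from functools import lru_cache

# Hypothetical knowledge source; assume this early version contains a wrong
# entry that domain experts later correct.
KNOWLEDGE = {"P3 slow drift": "normal"}


@lru_cache(maxsize=1024)
def answer(question: str) -> str:
    """Stand-in for an expensive model call; results are memoised per question."""
    return KNOWLEDGE.get(question, "unknown")


first = answer("P3 slow drift")         # computed, then cached: "normal" (wrong)
KNOWLEDGE["P3 slow drift"] = "anomaly"  # the accuracy fix lands in the data
second = answer("P3 slow drift")        # served from cache: still "normal"
answer.cache_clear()                    # the fix only appears after invalidation
third = answer("P3 slow drift")         # recomputed: "anomaly"
print(first, second, third)
```

In a real system the invalidation step is easy to overlook, which is one way an early accuracy problem can outlive its fix.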

The reverse sequence (get accuracy right, then optimise speed) does not carry the same risks. A slower system that is correct can be sped up. A fast system that is inaccurate is a harder problem, because the decisions that made it fast may now stand in the way of making it right.

The Good News About Latency

Here is the most important thing to understand about latency in 2025 and beyond: the models are getting faster whether you engineer for it or not.

Inference speed for frontier AI models has improved dramatically over the past two years. The response time that required significant infrastructure investment in 2023 is now available by default. Hardware improvements, advances in inference optimisation, and competition between model providers are compressing latency across the board at a pace that shows no signs of slowing.

This means that for most enterprise applications, latency is increasingly a solved or near-solved problem at the infrastructure level. What it does not mean is that accuracy improves automatically. Accuracy in your domain (against your data, for your use cases, validated by your domain experts) is the one thing that does not come out of the box and does not improve without deliberate investment.

The asymmetry is important. Latency is converging toward a commodity. Accuracy is not.

What the Sequencing Looks Like in Practice

Getting accuracy right before optimising latency is not just a philosophical position. It implies a specific order of work.

The starting point is definition: what does correct look like for this use case? This sounds straightforward and rarely is. The answer requires conversations between technical teams and domain experts, and it produces the evaluation criteria that everything else depends on.

From there, the work is iterative: build a version of the system, measure its accuracy against your defined criteria, identify where it fails, apply the appropriate intervention (RAG, fine-tuning, prompt engineering, or structural redesign), and measure again. This cycle continues until the accuracy threshold is met.
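The build, measure, intervene cycle can be expressed as a simple loop. This is a sketch under strong simplifying assumptions: systems are plain functions, each hypothetical intervention is a function that returns an improved system, and interventions are tried in a fixed order. In practice, people choose the next intervention by inspecting the failures, and a single step might mean adding retrieval, fine-tuning, or redesigning a component.

```python
from typing import Callable

System = Callable[[str], str]
Intervention = Callable[[System], System]


def accuracy(system: System, cases: list[tuple[str, str]]) -> float:
    """Fraction of (input, expected) pairs the system gets right."""
    return sum(system(x) == y for x, y in cases) / len(cases)


def improve_until(system: System, interventions: list[Intervention],
                  cases: list[tuple[str, str]],
                  threshold: float) -> tuple[System, list[float]]:
    """Measure, intervene, measure again; stop once the threshold is met."""
    history = [accuracy(system, cases)]
    for intervene in interventions:
        if history[-1] >= threshold:
            break  # accuracy target reached; no further intervention needed
        system = intervene(system)
        history.append(accuracy(system, cases))
    return system, history


def baseline(reading: str) -> str:
    """Hypothetical first version: only recognises spikes."""
    return "anomaly" if "spike" in reading else "normal"


def add_drift_rule(system: System) -> System:
    """Hypothetical intervention targeting the observed failure (slow drift)."""
    return lambda reading: "anomaly" if "drift" in reading else system(reading)


cases = [
    ("pressure spike on P3", "anomaly"),
    ("steady pressure on P3", "normal"),
    ("slow pressure drift on P3", "anomaly"),
]
final, history = improve_until(baseline, [add_drift_rule], cases, threshold=0.95)
print([round(score, 2) for score in history])
```

The returned `history` makes the "measure again" step explicit: each entry is the accuracy after one more round of work. If the interventions run out before the threshold is reached, the last entry will still be below it, and that is the signal that latency work should wait.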

Only at that point does latency optimisation become a meaningful engineering investment. By then, in many cases, the infrastructure improvements in the underlying models will have already delivered much of the latency reduction without additional effort.

The organisations that get this right tend to have one thing in common: they treat accuracy as a business requirement, not a technical afterthought. They define it, measure it, and hold the system accountable to it before they ask how fast it runs.

That discipline is what separates AI that compounds value over time from AI that generates impressive demos and then slowly disappears from operational use.

Speed matters. But first, the answer has to be right.